Frameworks

Over four decades I have developed a set of structured approaches to problems that I found conventional methods handled poorly. These frameworks are practical tools, not academic constructs. Each one was refined through repeated use in real organisations under real constraints.

These are not proprietary black boxes. They make the thinking visible and involve clients in the process, so the work is done with them, not to them. Clients can continue to use and adapt these approaches long after an engagement ends.

QBAM — Question Based Architecture Model

QBAM is a structured approach to building an enterprise architecture through guided discovery rather than top-down documentation. Rather than beginning with frameworks and templates, it begins with questions: questions that draw out what stakeholders actually know, believe, and need.

The output is standard enterprise architecture material — current and target state, roadmaps, standards and principles — but arrived at through a process that builds consensus and ownership, not just artefacts.

It was developed and refined across engagements in financial services, the public sector, professional services and other industries.

POSM — Probability of Success Matrix

The Probability of Success Matrix is a diagnostic tool for projects and programmes that are in difficulty or failing to deliver. It assesses 12 criteria known to correlate with success or failure, weighted to suit the nature of the investment and its stage in the lifecycle. It does produce a probability of success, which some clients find eye-opening, but its most valuable output is highlighting and prioritising where improvements are needed.

Critically, POSM is designed to help leadership teams reach their own conclusions. Externally imposed diagnoses are often resisted; conclusions a team arrives at through facilitated, structured reflection are far more likely to produce action.

The tool can be applied quickly — often in a single facilitated session — and produces a clear, prioritised picture of what needs to change.
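
To make the idea of weighted criteria concrete, here is a minimal sketch in Python. It is an illustration only, not the POSM scoring model itself: the four criteria names, the 0 to 1 rating scale, the weights and the weighted-average calculation are all assumptions made for the example, and the real tool uses 12 criteria that are not listed here.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str      # hypothetical criterion name, for illustration only
    score: float   # facilitated rating from 0.0 (poor) to 1.0 (strong)
    weight: float  # relative importance for this investment and lifecycle stage


def weighted_score(criteria):
    """Weighted average of the ratings (an assumed way to combine them)."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(c.score * c.weight for c in criteria) / total_weight


def improvement_priorities(criteria):
    """Rank criteria by weighted shortfall, largest first."""
    return sorted(criteria, key=lambda c: c.weight * (1.0 - c.score), reverse=True)


# Illustrative ratings, as they might be agreed in a facilitated session.
criteria = [
    Criterion("Executive sponsorship", 0.8, 3.0),
    Criterion("Clear business case", 0.5, 2.0),
    Criterion("Realistic plan", 0.2, 3.0),
    Criterion("Team capability", 0.9, 1.0),
]

overall = weighted_score(criteria)
priorities = [c.name for c in improvement_priorities(criteria)]

print(f"Overall weighted score: {overall:.2f}")
print("Where to focus first:", priorities)
```

The ranking matters more than the headline number: a heavily weighted criterion with a low rating rises to the top of the list, which mirrors the emphasis on prioritising improvements rather than on the score alone.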

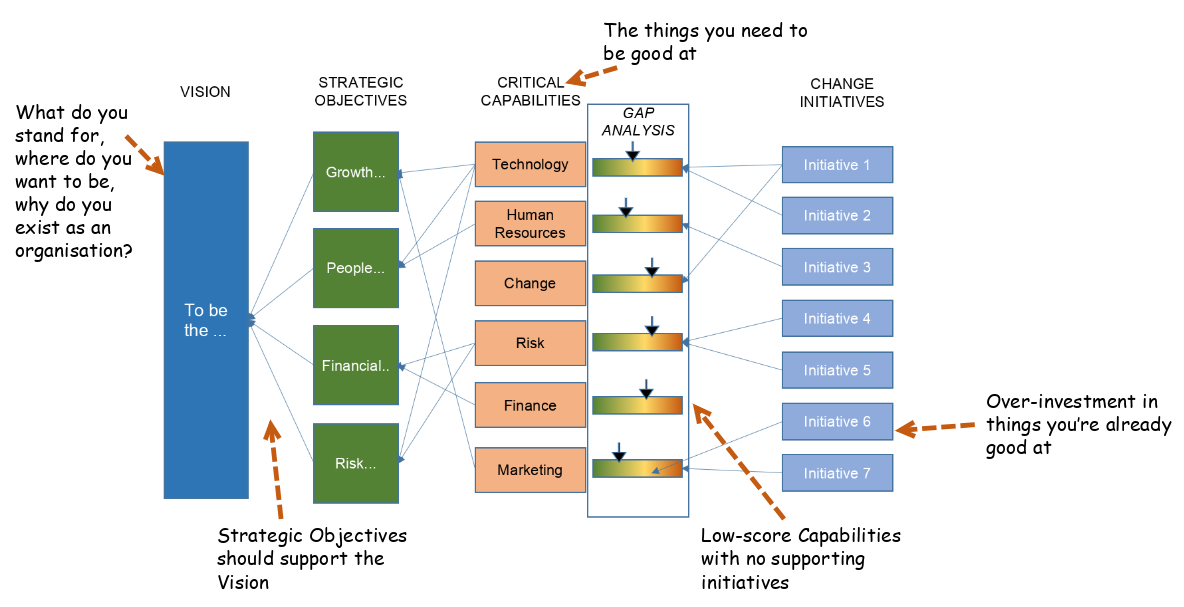

LITSO — Linking Investment to Strategic Objectives

LITSO maps the connection between investment in technology and change and the strategic objectives that investment is meant to serve. On a single page (A3 in complex cases), it organises four columns left to right: Vision, Strategic Objectives, Critical Capabilities, and Change Initiatives. Everything the organisation is trying to achieve, and everything it is spending money on to get there, is visible in one view.

The Critical Capabilities column is the pivot. Rather than drawing lines directly from initiatives to objectives — which most organisations can already do nominally — LITSO inserts a harder question: what does the organisation actually need to be good at in order to achieve its strategic objectives? Answering that honestly, in a facilitated workshop, is where the real work happens. The resulting list of capabilities becomes the analytical backbone of everything that follows.

Each capability is then rated on two dimensions: how critical it is, and the organisation's current capability level. Right-to-left, each initiative is linked to the capabilities it addresses. Left-to-right, each objective is traced through to its supporting capabilities and the initiatives behind them. The gaps this reveals are rarely comfortable: substantial investment directed at areas of existing strength; critical capability gaps with nothing behind them; strategic objectives with no supporting initiatives at all.
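
The tracing described above can be sketched as a small data model. This is a hedged illustration, not the LITSO workshop tooling: the 1 to 5 rating scales, the thresholds, and every objective, capability and initiative name below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str
    criticality: int    # assumed scale: 1 (low) to 5 (high)
    current_level: int  # assumed scale: 1 (weak) to 5 (strong)


# Hypothetical workshop output.
capabilities = {c.name: c for c in [
    Capability("Online onboarding", 5, 2),
    Capability("Data analytics", 4, 1),
    Capability("Process automation", 3, 4),
]}

# Left-to-right: each objective and the capabilities it depends on.
objectives = {
    "Grow digital revenue": ["Online onboarding", "Data analytics"],
    "Reduce cost to serve": ["Process automation"],
    "Improve customer insight": ["Data analytics"],
}

# Right-to-left: each initiative and the capabilities it addresses.
initiatives = {
    "New onboarding portal": ["Online onboarding"],
    "RPA expansion": ["Process automation"],
}


def funded_capabilities(initiatives):
    """Capabilities that at least one initiative addresses."""
    return {cap for caps in initiatives.values() for cap in caps}


def unfunded_critical_gaps(capabilities, initiatives, min_criticality=4, max_level=2):
    """Critical, weak capabilities with no initiative behind them."""
    funded = funded_capabilities(initiatives)
    return sorted(
        c.name for c in capabilities.values()
        if c.criticality >= min_criticality
        and c.current_level <= max_level
        and c.name not in funded
    )


def investment_in_strengths(capabilities, initiatives, strong_level=4):
    """Initiatives aimed only at capabilities that are already strong."""
    return sorted(
        name for name, caps in initiatives.items()
        if caps and all(capabilities[cap].current_level >= strong_level for cap in caps)
    )


def unsupported_objectives(objectives, initiatives):
    """Objectives none of whose capabilities has an initiative behind it."""
    funded = funded_capabilities(initiatives)
    return sorted(
        obj for obj, caps in objectives.items()
        if not any(cap in funded for cap in caps)
    )


gaps = unfunded_critical_gaps(capabilities, initiatives)
strengths = investment_in_strengths(capabilities, initiatives)
orphans = unsupported_objectives(objectives, initiatives)

print("Critical gaps with nothing behind them:", gaps)
print("Investment directed at existing strength:", strengths)
print("Objectives with no supporting initiatives:", orphans)
```

In this made-up example, all three of the uncomfortable findings appear: one critical capability is unfunded, one initiative reinforces an existing strength, and one objective has no initiative behind it.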

LITSO has been applied across financial services, public sector and international organisations — from investment banking to the DVLA. It works wherever leadership needs a single, honest picture of whether their investment portfolio is actually pointed at their strategy.

ABCD — A pragmatic assumption-based risk methodology

ABCD isn't mine; I adopted it from external training while at EDS in the 2000s, and I have been using it successfully ever since. As I recall, it originally stood for assumption-based continuity dynamics. It is a risk methodology built around the explicit identification and management of assumptions. Most project risk approaches catalogue what might go wrong; ABCD starts earlier. It asks what must be true for the approach to succeed (positive assumptions) and then tracks whether those things remain true.

When an assumption is judged unlikely to be true, and the impact of it being false would be high, it is treated as a risk, with two follow-on questions. First, how much would it cost to make this assumption likely to be true (or, if I can't spend my way out of it, how much might it cost me if the assumption proves to be false)? Second, when do I need to spend this money? The answers produce a fairly accurate contingency plan: how much should be allocated to manage risk, and when it may be needed.
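
As a rough sketch of how the two questions turn into a contingency plan, the Python below filters assumptions down to risks and totals the cost by the period in which the money would be needed. The yes/no classification, the period labels and all of the example assumptions and amounts are hypothetical simplifications for illustration, not part of the ABCD method as taught.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Assumption:
    statement: str
    likely_true: bool  # simplified to yes/no for this sketch
    high_impact: bool  # simplified to yes/no for this sketch
    cost: float        # cost to make it likely true, or exposure if it proves false
    needed_by: str     # period in which the money would be needed


def risks(assumptions):
    """Assumptions that are unlikely to be true and high impact."""
    return [a for a in assumptions if not a.likely_true and a.high_impact]


def contingency_plan(assumptions):
    """Total contingency per period, in period order."""
    plan = defaultdict(float)
    for a in risks(assumptions):
        plan[a.needed_by] += a.cost
    return dict(sorted(plan.items()))


# Hypothetical assumption register.
register = [
    Assumption("Key supplier delivers the interface on time", False, True, 40_000, "Q2"),
    Assumption("Test environment is available from day one", True, True, 10_000, "Q1"),
    Assumption("Business users can be released for acceptance testing", False, True, 25_000, "Q3"),
    Assumption("Legacy data is clean enough to migrate", False, True, 15_000, "Q3"),
    Assumption("Meeting rooms are available for workshops", False, False, 2_000, "Q1"),
]

plan = contingency_plan(register)
total = sum(plan.values())

for period, amount in plan.items():
    print(f"{period}: {amount:,.0f}")
print(f"Total contingency: {total:,.0f}")
```

Only three of the five assumptions become risks here, so the plan holds contingency in two periods rather than spreading a flat percentage across the whole budget.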

ABCD is deliberately lightweight. It is designed to be adopted by teams without specialist training and maintained as part of normal programme governance. It doesn't ask project teams to juggle the distinctions between Risks, Assumptions, Issues and Dependencies, as traditional RAID logs do.